About

In a race against AI-generated misinformation, 45 graduate students proved that real-world data and cross-disciplinary grit are the best defense for democratic integrity.

In March 2026, The Bright Initiative by Bright Data partnered with Georgetown’s Massive Data Institute (MDI) and IBM to host the first-ever Datathon for Democracy, empowering graduate students to use real-world public web data to build AI-driven prototypes for detecting deepfakes and strengthening election communication.

The Challenges

In today’s fragmented digital ecosystem – supercharged by generative AI tools that can produce convincing false content in seconds – information pollution on social media spreads faster than accurate information about voting and elections. Election officials, fact-checkers, and civic organizations struggle to keep pace. The question is no longer whether misinformation will appear, but whether authoritative voices can respond quickly and effectively enough to build community resilience.

The Datathon posed two interconnected challenges:

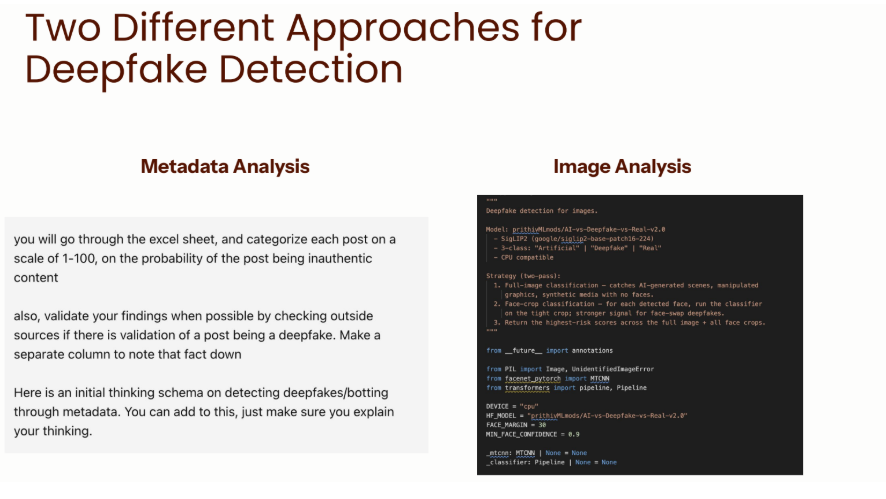

Challenge 1: Deepfake Detection

How can we identify synthetic media, manipulated content, and coordinated inauthentic behavior across social media platforms?

Challenge 2: Counterspeech & Prebunking

What communication strategies do election officials use to prebunk anticipated misinformation, debunk circulating falsehoods, and build trust in democratic processes—and where are the gaps?

How It Worked

Faculty from Georgetown’s Massive Data Institute – Associate Teaching Professor Thessalia Merivaki, Assistant Research Professor Sejin Paik, Director Lisa Singh and Associate Research Professor Renée DiResta – sorted 45 students into nine teams (five deepfake, four counterspeech), deliberately mixing skill levels so that each team included students with strong data science backgrounds alongside those bringing policy, legal, or subject-matter expertise.

Teams worked through a structured workflow:

1. Data Collection: Using Bright Data’s dataset marketplace, teams accessed over 70,000 social media posts from Facebook, X, Instagram, and YouTube—including communications from election officials, political candidates, and influencers during the 2024 and 2025 U.S. election cycles.

2. Classification & Labeling: Teams applied structured taxonomies to categorize content—whether identifying synthetic media signals for deepfakes or labeling official communications as prebunking, debunking, or trust-building messages.

3. Analysis & Pattern Detection: Using Python notebooks and statistical tools, teams identified patterns across platforms, jurisdictions, and time periods.

4. Agent Development: Teams explored IBM watsonx Orchestrate to build AI agents that could automate classification, detect coverage gaps, or generate recommendations for election officials.

The Results: Prototyping Solutions in Five Hours

First Place: Multimodal Deepfake Detection

The winning team developed a combined approach using both image analysis and metadata forensics to detect “inauthentic content.” By cross-referencing visual artifacts (lighting inconsistencies, facial boundary blur) with behavioral signals (account age, posting patterns, engagement ratios), their model flagged suspected synthetic media with higher confidence than either method alone.

Second Place: Hyperlocal Gap Analysis

A counterspeech team built a classification system using the EO Communications Tracker taxonomy to categorize official messages as prebunking, debunking, or trust-building content. They then conducted hyperlocal gap analysis, identifying jurisdictions where misinformation narratives were circulating but official responses were absent or delayed. Their “gap scorecard” could help election officials prioritize communications resources.

The Value of the Datathon Experience

Practical Tool Fluency: Students move beyond textbooks to gain hands-on experience with enterprise platform access to Bright Data solutions and watsonx Orchestrate, learning to extract value from large-scale, real-world datasets.

Collaborative Problem-Solving: By integrating technical rigor with policy context and cybersecurity insights, teams learn that the best solutions come from cross-disciplinary cooperation.

Professional Resilience: The intense, compressed timeline forces students to master labor division and rapid delivery—ensuring they can produce functional results with limited time.

“Working with people from [the fields of] Data Science, Public Policy, and Cybersecurity made me realize how much your background shapes the way you think about a problem. The interdisciplinary dynamic really made our work stronger, and seeing how the other teams approached the same challenge during the presentations reinforced that there is no single right way to look at these problems.” – Datathon participant